Reaching AI Limits (and Ending Up on StackOverflow Again)

Some thoughts about public data quality degradation. Contributing to the public domain for the sake of humanity?

I’ve been carrying this idea around for a while: it still makes sense to contribute 𝐧𝐨𝐧-𝐀𝐈-𝐠𝐞𝐧𝐞𝐫𝐚𝐭𝐞𝐝 𝐤𝐧𝐨𝐰𝐥𝐞𝐝𝐠𝐞 to the public domain, for a very simple reason: it now works not only to help humans, but to 𝐭𝐞𝐚𝐜𝐡 𝐀𝐈 𝐭𝐡𝐚𝐭 𝐬𝐞𝐫𝐯𝐞𝐬 𝐡𝐮𝐦𝐚𝐧𝐬.

Also, it kinda overlaps with my frustration around edge cases when LLMs don't help, but rather 𝐩𝐥𝐚𝐲 "𝐜𝐨𝐧𝐟𝐢𝐝𝐞𝐧𝐭𝐥𝐲 𝐰𝐫𝐨𝐧𝐠".

I use AI daily, and it boosts my productivity. Until it doesn't.

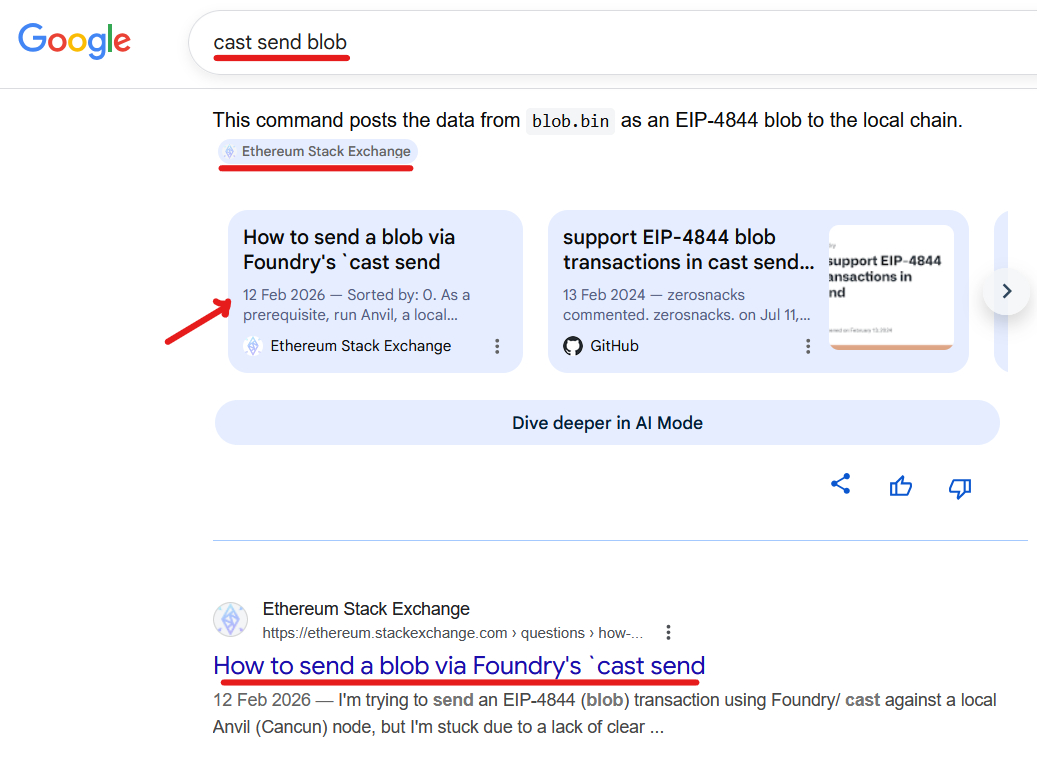

Look at this trivial case: try searching for "cast send blob", and you'll find my self-answered StackOverflow post at the top.

Why is it at the top, and why is it self-answered?

That post exists for a simple reason: AI failed me on a trivial edge case, wasting a few hours ⏳ of engineering time.

ethereum.stackexchange.com is a StackOverflow for Ethereum-related topics.

Once I found a solution, I decided to contribute it to public knowledge by posting a self-answered question, so that search engines could index it and fill the "knowledge gap".

But again...

Why did the issue appear in the first place?

Fast forwarding: Nobody asks publicly → Nobody answers → AI knows nothing.

Once the article appears publicly, Google indexes it, and it quickly appears at the top. Now, AI picks it up and even partially uses my wording.

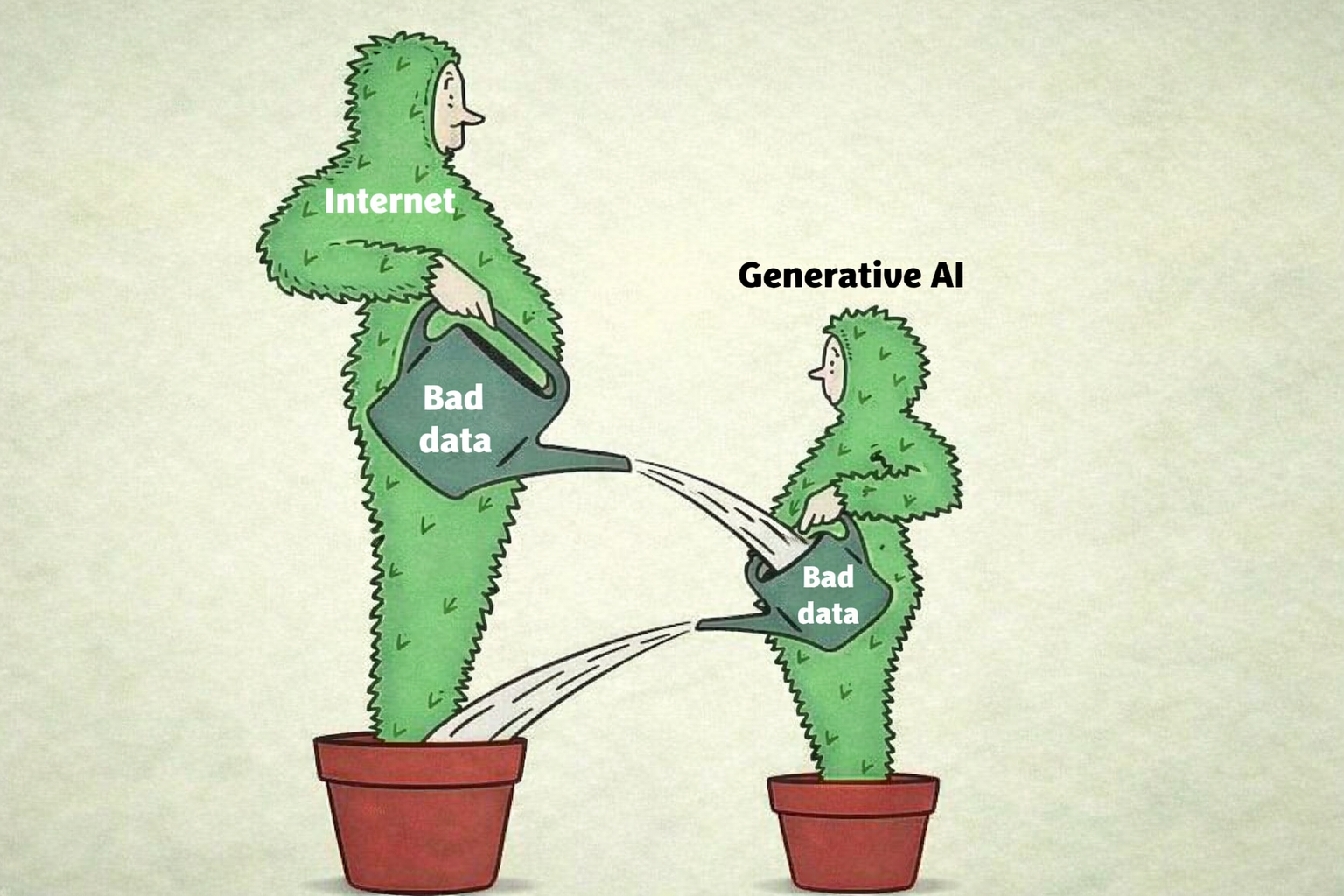

Public Knowledge Is Decaying

With everybody locked in their own AI microcosm, information stops recycling through the public domain.

It's well known that "StackOverflow is dying" (as of 2026), etc.

I'm trying to see it differently.

As the internet floods with AI-generated code, the "Solved questions" on StackOverflow are transitioning from "Help Desk answers" to "Ground Truth." Human validation is becoming the premium service.

(from the article here: https://gist.github.com/tanaikech/30b1fc76da0da8ff82b91af29c0c9f83)

The incentive to contribute to public knowledge has shifted. Now it is less like helping people directly and more like feeding future models that everyone will use.

AI Wastes Engineering Time at the Edges

- I spent over an hour ⏳ asking different LLMs from different vendors how to send a blob via the `cast` tool. All models answered confidently – and all were wrong. They invented CLI flags, hallucinated parameters, and ignored requests for up-to-date documentation. The real solution took minutes ⏳: I stopped prompting and read `cast --help` carefully (and then I posted that self-answer).
- The other day, a similar thing happened with LogQL (a query language for Grafana Loki). After an hour or two ⏳ of back-and-forth with AI, I gave up, opened the official docs, and composed a proper LogQL query myself.
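The "read the help output first" habit can be partly automated. Here is a minimal Python sketch that flags any option in an AI-suggested command that the tool's own help text never mentions. Everything in it is an assumption made for illustration: `mytool`, its options, and the suggested command are hypothetical, not taken from `cast` or any real CLI, and the regex is a rough heuristic, not a real argument parser.

```python
import re

# Matches long options such as --dry-run and short options such as -v.
# Heuristic only: it will also match things like negative numbers (-5).
FLAG_PATTERN = re.compile(
    r"(?<![\w-])(--[A-Za-z0-9][\w-]*|-[A-Za-z0-9])(?![\w-])"
)


def flags_in_help(help_text: str) -> set[str]:
    """Collect every option name that appears in a tool's help output."""
    return set(FLAG_PATTERN.findall(help_text))


def unknown_flags(help_text: str, suggested_command: str) -> list[str]:
    """Return flags used in the suggested command that the help text never mentions."""
    documented = flags_in_help(help_text)
    suggested = FLAG_PATTERN.findall(suggested_command)
    return [flag for flag in suggested if flag not in documented]


# Hypothetical help output for a made-up tool called "mytool".
HELP_TEXT = """
Usage: mytool send [OPTIONS] <TARGET>

Options:
  -v, --verbose        Print more detail
      --payload-file   Path to the payload to send
  -h, --help           Show this message
"""

# Hypothetical command proposed by an LLM; --attach-payload is invented.
SUGGESTED = "mytool send --payload-file data.bin --attach-payload data.bin -v"

print(unknown_flags(HELP_TEXT, SUGGESTED))  # ['--attach-payload']
```

In real use, the help text could be captured with `subprocess.run([tool, "--help"], capture_output=True, text=True).stdout`. A flag that passes this check is only known to exist, so the docs still decide whether it is used correctly.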

This happened to me a few times.

LLMs fail similarly at edge cases.

Edge Cases Break LLM Reasoning

LLMs are trained largely on public text. When edge cases aren't documented, models struggle to find correct solutions and often hallucinate non-working answers with full confidence.

This failure mode is kind of invisible. If you already know the tool or the domain well, you'll notice when the answer smells wrong and go read the docs. If you don't, you might just keep trying random variations of a hallucinated solution, assuming you're the one missing something.

That's not great, especially for less popular tools, smaller projects, or newer ecosystems. It wastes engineering time, and it adds up. The problem isn't that the models don't know. It's that they don't signal uncertainty. That part might be fixable: models can be taught to say "I don't know". The dependency on public knowledge is a different thing, though. It's not easy to "fix".

The conclusion here is that both AI and I end up relying on static, non-conversational public knowledge.

In a way, this brings us back to a familiar place: contributing to public knowledge matters again, not only to humans but also to the AI that serves them.