CI/CD + SAST: Expectation vs Reality

It's often hard to deliver security scan results synchronously, that is, to block the merge based on the outcome of security verification. At the same time, you don't want to let vulnerable code reach a release.

Expectation

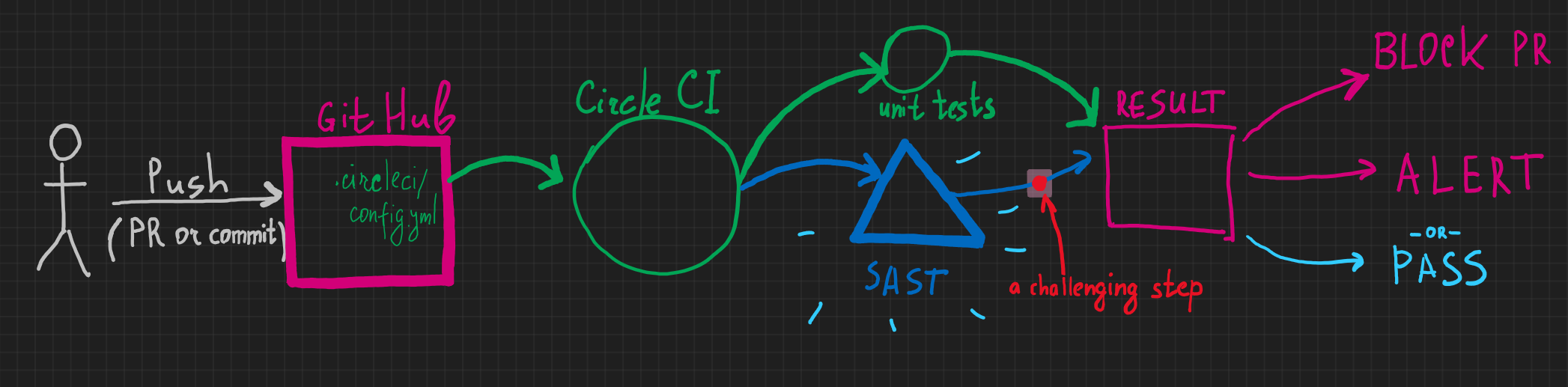

Imagine you have set up your perfect DevSecOps pipeline.

⏩ A developer pushes source code to a favorite source version control system,

👷‍♂️ which activates a Continuous Integration (CI) pipeline,

🔬 which starts security testing,

🦠 then it finds a critical vulnerability,

🛑 which, in turn, stops the entire pipeline, and the developer goes off to fix the issue.

Perfect, the security toolchain has just prevented a disaster! You have just saved the world (!) from releasing a vulnerable version of the product.

The perfect outcome is to block the CI pipeline until all the identified issues have been addressed.

Reality

🔨 You integrate a tool of your choice into the CI/CD pipeline,

📝 it gives you a ton of false positives,

🤯 you start triaging,

🤬 developers are irritated, they can't wait because the product release is scheduled "for yesterday",

😒 you "temporarily" disable the integration until you can 🧹 clean up the security testing report,

🥵 and it never ends.

SAST stands for Static Application Security Testing.

A security scan is usually required either for Pull Request verification before the code is merged or after a commit to the master branch. Either way, vulnerable code should not make it into a release.

However,

- What if it finds 1000 false positives (wrong findings)?

- What if it takes 1 day to finish the analysis?

- How does the tool decide that the detected vulnerability is critical?

- How does it decide whether the finding is an actual vulnerability at all?

- What are the criteria for stopping the pipeline?

- How long does it take to analyze the code?

Challenges

At the end of the day, it's often hard to deliver scan results in a synchronous (blocking) way.

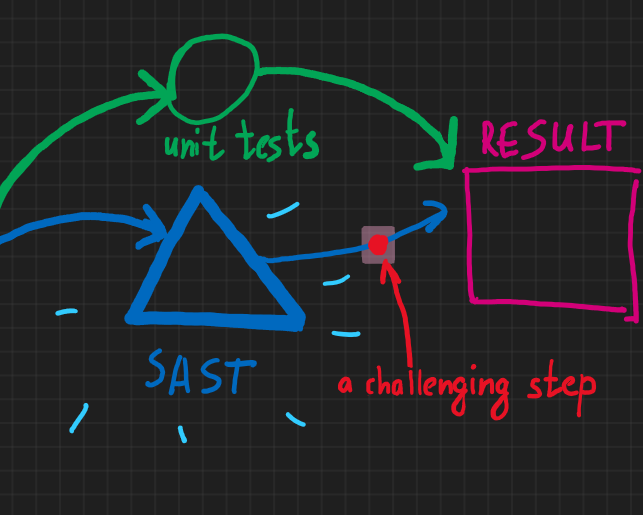

I would frame this as the challenge of synchronous vs asynchronous security testing.

Why is it often hard to deliver security scan results into a CI/CD pipeline in a synchronous (blocking) way?

- Legacy code with numerous issues (with a huge SAST output)

  - “Unclean” source code may result in a lot of security findings, blocking the pipeline entirely until all the findings are fixed or dismissed.

- Scanning time

  - Different SAST tools have different efficiencies in terms of time and findings.

  - A huge code base may lead to slow scans (from 1 to a few hours).

  - What we also found is that cloud-based solutions usually have somewhat slower and/or less predictable scan times (depending on the SLA of the vendor).

- SAST tool limitations

  - Tools differ widely in how well they can report scan results, e.g. a tool may find a true positive but have no means to report it back immediately.

  - SAST tools may have specific requirements for the project structure and build steps.

- Triaging: tools always generate false positives, so there should be a convenient way for a developer to fix or dismiss a security finding, with cross-validation by a security specialist. The challenge is having a pipeline that supports the business (not completely blocking the business).

- Budget compromises

  - There are sophisticated tools on the market; however, budget limitations may play a major role in what the team can afford.

- Filtering commits

  - Scans usually run only for commits and PRs to master and release branches.
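The commit filtering from the last point can be sketched as a small predicate that the CI job evaluates before starting a scan. This is a minimal illustration, not a feature of any particular CI system: the function name `should_run_sast` and the `release/*` naming pattern are assumptions, so adjust them to your own branching model.

```python
from fnmatch import fnmatchcase

# Hypothetical branch patterns; "release/*" is an assumed naming convention.
SCANNED_BRANCH_PATTERNS = ("master", "release/*")


def should_run_sast(target_branch: str) -> bool:
    """Return True when a commit or PR targets a branch that warrants a scan."""
    return any(
        fnmatchcase(target_branch, pattern)
        for pattern in SCANNED_BRANCH_PATTERNS
    )
```

With these patterns, a PR into `release/1.2` triggers a scan, while a push to `feature/login` does not.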

Solution

Incremental Integration

In practice, the solution is to integrate SAST in multiple stages.

- Stage 1: Baselining

  Tools are purchased, the source code is scanned for the first time, and false positives are reviewed and dismissed. Tickets are created, and vulnerabilities are fixed and the fixes submitted.

- Stage 2: Initial asynchronous integration

  SAST is integrated into the CI/CD pipeline; however, scan results don’t block the pipeline (yet). The team works through triaging and fixing the issues.

- Stage 3: Synchronous integration

  SAST blocks the pipeline in case of critical findings (according to the security policy). In the ideal scenario, a Jira ticket is created automatically.

The last stage is quite challenging because of false positives and all the other challenges mentioned above. With some tools, e.g. gosec for Golang, synchronous integration is easy to achieve and is the only way commits are handled (the tool is very quick and usually doesn’t produce a lot of output).

With more sophisticated cloud-based tools (Coverity, Fortify-on-Demand, Veracode, etc.), we go with a hybrid model: asynchronous SAST scans for less critical projects, and synchronous scans for more critical ones wherever that is technically and organizationally possible.
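The difference between the asynchronous and synchronous stages comes down to one decision at the end of the scan job: fail the pipeline or only report. Below is a minimal sketch of that decision. The `Finding` shape, the `Mode` enum, and the 1-is-most-critical severity scale are assumptions made for illustration; real tools expose their results in their own formats.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Iterable


class Mode(Enum):
    ASYNC = "async"  # Stage 2: findings are reported, the pipeline never blocks
    SYNC = "sync"    # Stage 3: critical findings block the pipeline


@dataclass(frozen=True)
class Finding:
    rule_id: str             # hypothetical identifier of the triggered rule
    severity: int            # assumed scale: 1 = most critical
    dismissed: bool = False  # True once triaged as a false positive


def pipeline_should_fail(findings: Iterable[Finding], mode: Mode,
                         blocking_severity: int = 1) -> bool:
    """Decide whether the CI job should fail for this scan."""
    if mode is Mode.ASYNC:
        return False  # report only; triage happens outside the pipeline
    # In synchronous mode, any untriaged finding at or above the
    # blocking threshold stops the pipeline.
    return any(
        not f.dismissed and f.severity <= blocking_severity
        for f in findings
    )
```

In a hybrid model, a less critical project would call this with `Mode.ASYNC`, and a more critical one with `Mode.SYNC`; moving a project from Stage 2 to Stage 3 is then a one-line configuration change rather than a new integration.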

Triage

We have to come up with a few levels of issue severity. The simplest categorization is the following:

- Severity 1 (Highest) implies the quickest mitigation and release (1-2 days) for the most critical issues.

- Severity 2 (Middle) means no direct impact and implies slower mitigation (say, 30 days).

- Severity 3 (Lowest) allows up to 3 months for mitigation.

Other classification schemes may use up to 5 severity levels, each with its own criteria.
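The three-level scheme can be encoded as a lookup from severity to mitigation window, which makes it easy to compute a due date for each ticket. This is a sketch under stated assumptions: 2 days is taken as the upper bound of "1-2 days", 90 days as a reading of "3 months", and the function names are hypothetical.

```python
from datetime import date, timedelta

# Mitigation windows in days, keyed by severity (1 = highest).
# Assumed values: 2 = upper bound of "1-2 days", 90 = "3 months".
MITIGATION_WINDOW_DAYS = {1: 2, 2: 30, 3: 90}


def mitigation_deadline(severity: int, found_on: date) -> date:
    """Return the date by which a finding of this severity should be fixed."""
    return found_on + timedelta(days=MITIGATION_WINDOW_DAYS[severity])


def is_overdue(severity: int, found_on: date, today: date) -> bool:
    """Return True when the mitigation window has already passed."""
    return today > mitigation_deadline(severity, found_on)
```

For example, a Severity 2 finding reported on 2024-01-01 would be due on 2024-01-31.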

Release

A build is passed to the release under either of 2 major conditions:

- All issues are fixed.

- The security team has manually allowed the release of an urgent hotfix. SAST sometimes falsely rates minor findings as major ones. SAST tools usually let a security specialist (appsec, security lead) pass the build with a dismissal comment explaining why it is allowed to be released.
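These two conditions can be expressed as a simple release gate. The sketch below is illustrative only: the `Override` record and the `can_release` function are hypothetical names, and in practice the dismissal would be recorded in the SAST tool itself rather than passed around as an object.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Override:
    approver: str  # security specialist, e.g. appsec or security lead
    comment: str   # why the build is allowed to be released


def can_release(open_issue_count: int,
                override: Optional[Override] = None) -> bool:
    """Allow a release when all issues are fixed or security has signed off."""
    if open_issue_count == 0:
        return True  # condition 1: all issues are fixed
    # Condition 2: a manual approval, which must carry a non-empty comment.
    return override is not None and bool(override.comment.strip())
```

Requiring a non-empty comment keeps the override auditable: anyone reviewing the release later can see who allowed it and why.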

Summary

Integrate security tools incrementally in 3 stages: baselining, initial asynchronous integration, and synchronous (blocking) integration. Triage issues semi-manually to adapt to the tools of your choice. Work with teams, gain knowledge, and evolve with your system.